In my previous blog post, we learned how to deploy an API to Google Kubernetes Engine (GKE) on GCP. Today we will take a high-level look at Kubernetes itself.

Google created Kubernetes (K8s), building on its experience managing containerized applications on its own internal infrastructure, and released it as an open-source project in 2014.

Kubernetes is an open-source platform designed to automate the deployment, scaling, and management of containerized applications. It has become the de facto standard for running containerized applications in production environments.

What is Kubernetes?

Kubernetes is an orchestration system that automates the deployment, scaling, and management of containers. It provides a unified platform for deploying and managing containers, making it easier for organizations to run and scale their applications. Kubernetes is highly extensible, allowing organizations to customize it to meet their specific needs.

A Kubernetes cluster is made up of a control plane (traditionally called the master node), which controls and manages a group of worker nodes where the containers actually run.

How does Kubernetes work?

Kubernetes runs applications that have been packaged into containers. Each container holds a piece of an application, such as a microservice, and Kubernetes groups one or more containers into a pod, its smallest deployable unit. Pods can be deployed and managed independently, making it easier to scale and manage applications.

Kubernetes uses a declarative approach to manage containers: you describe the state you want your application to be in, and Kubernetes continuously works to make the actual state match it. This makes it easier to manage complex applications, as you don't need to script the details of how containers are deployed and managed.
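To make the declarative idea more concrete, here is a minimal sketch in Python of a reconciliation pass. It is a toy model, not Kubernetes code: the `reconcile` function and the pod naming scheme are assumptions made up for illustration. It only shows the principle of comparing a desired state with an actual state and acting on the difference.

```python
from typing import List


def reconcile(app: str, desired_replicas: int, running: List[str]) -> List[str]:
    """Return the pod names that should exist after one reconciliation pass.

    Hypothetical helper: real controllers talk to the API server instead of
    working on a plain list of names.
    """
    pods = list(running)  # copy so the caller's list is not modified
    index = 0
    # Too few pods: create new ones until the desired count is reached.
    while len(pods) < desired_replicas:
        name = f"{app}-{index}"
        if name not in pods:
            pods.append(name)
        index += 1
    # Too many pods: remove the surplus from the end of the list.
    del pods[desired_replicas:]
    return pods


if __name__ == "__main__":
    state = ["web-0"]                    # actual state: one pod is running
    state = reconcile("web", 3, state)   # desired state: three replicas
    print(state)
    state = reconcile("web", 1, state)   # desired state changes to one replica
    print(state)
```

The caller never says "start two pods" or "stop two pods"; it only states the desired count, and the loop works out which actions are needed.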

Running applications on Kubernetes brings several benefits:

- Scalability: Kubernetes makes it easy to scale your application, either by adding more pod replicas or by increasing the resources assigned to existing containers.

- Resilience: Kubernetes automatically monitors containers and restarts them if they fail, which helps keep your application available.

- Portability: Kubernetes can run on a variety of cloud platforms, as well as on-premises. This makes it easier to move your application from one platform to another, reducing vendor lock-in.

- Integration: Kubernetes integrates with a variety of tools and platforms, making it easier to integrate your application with other systems.

The main components of a Kubernetes cluster are:

- API server: The central component of the Kubernetes architecture and the entry point for all administrative tasks. It exposes the Kubernetes API as a RESTful interface, and the other components use it to interact with the cluster.

- etcd: A distributed key-value store that holds the configuration and state data of the cluster. This data records the desired state that the rest of the system works to maintain.

- Scheduler: Responsible for assigning pods to worker nodes. It watches the API server for pods that have no node yet and chooses a suitable node based on resource requirements and other constraints.

- Controller manager: Responsible for maintaining the desired state of the cluster. It monitors the actual state and makes changes as necessary to bring it in line with the desired state.

- Kubelet: Runs on each worker node and communicates with the API server. It receives instructions for the pods assigned to its node and is responsible for starting and stopping their containers.

- Kube-proxy: Runs on each worker node and maintains the network rules that route traffic to the pods behind a service.
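As an illustration of what the scheduler does, the sketch below filters out nodes that cannot fit a pod and then scores the remaining ones. This is a heavily simplified assumption: the real scheduler uses many more filtering and scoring rules, and the `pick_node` function, the node names, and the `cpu_m`/`mem_mi` keys are hypothetical.

```python
from typing import Dict, Optional


def pick_node(request: Dict[str, int], free: Dict[str, Dict[str, int]]) -> Optional[str]:
    """Pick a node for a pod, or return None if no node has enough room."""
    # Filter step: keep only nodes with enough of every requested resource.
    feasible = [
        name for name, resources in free.items()
        if all(resources.get(key, 0) >= amount for key, amount in request.items())
    ]
    if not feasible:
        return None  # the pod would stay pending
    # Score step (toy rule): prefer the node with the most free CPU.
    # Sorting first makes ties resolve alphabetically.
    return max(sorted(feasible), key=lambda name: free[name].get("cpu_m", 0))


if __name__ == "__main__":
    free_resources = {
        "node-a": {"cpu_m": 500, "mem_mi": 1024},
        "node-b": {"cpu_m": 2000, "mem_mi": 512},
        "node-c": {"cpu_m": 1500, "mem_mi": 4096},
    }
    print(pick_node({"cpu_m": 1000, "mem_mi": 1024}, free_resources))
    print(pick_node({"cpu_m": 4000}, free_resources))
```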

To deploy an application in a Kubernetes cluster, you create a Deployment manifest that defines the desired state of the application, such as the number of replicas and the container image to use. The Kubernetes control plane then ensures that the actual state of the system matches the desired state by creating the necessary ReplicaSet and pods.
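Manifests are usually written in YAML, but `kubectl` also accepts JSON, so a manifest can be generated from a short Python script. The sketch below builds a minimal Deployment; the application name, image URL, and container port are placeholders chosen for illustration, not values from a real project.

```python
import json
from typing import Any, Dict


def deployment_manifest(name: str, image: str, replicas: int) -> Dict[str, Any]:
    """Build a minimal apps/v1 Deployment as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,                  # desired number of pods
            "selector": {"matchLabels": labels},   # must match the pod labels below
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": 8080}],  # assumed port
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical name and image; replace them with your own.
    manifest = deployment_manifest("my-api", "example.com/my-api:1.0.0", 3)
    print(json.dumps(manifest, indent=2))
```

Saving the output to a file such as `deployment.json` and running `kubectl apply -f deployment.json` would submit it to the cluster.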

Kubernetes also keeps the application running as conditions change. If a pod fails, or the node it runs on goes down, Kubernetes automatically creates a new pod to replace it. If the load on an application increases and a Horizontal Pod Autoscaler is configured, Kubernetes can also scale the number of replicas automatically to handle the increased load.
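Automatic scaling is typically handled by the Horizontal Pod Autoscaler. Its documented core calculation is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The Python sketch below reproduces that formula with a tolerance band and min/max limits; the function name and default values are assumptions for illustration, and the real autoscaler has additional behaviour (stabilization windows, handling of unready pods) that is left out here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Estimate the replica count an autoscaler would aim for."""
    ratio = current_metric / target_metric
    # Close enough to the target: leave the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Never go outside the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # 3 replicas at 90% average CPU with a 60% target
    print(desired_replicas(3, 90, 60))
    # 4 replicas at 30% average CPU with a 60% target
    print(desired_replicas(4, 30, 60))
```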

Kubernetes also provides features such as service discovery and load balancing to make it easier to access applications running in the cluster, as well as rolling updates, which replace pods gradually so that a new version can be released with minimal downtime.
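The idea behind a rolling update can be sketched as a small simulation. It is a simplified model under stated assumptions: new pods are treated as ready the moment they start, and the parameters only mimic the meaning of the Deployment settings `maxSurge` and `maxUnavailable`. The `rolling_update` function itself is hypothetical.

```python
from typing import List, Tuple


def rolling_update(replicas: int, max_surge: int = 1, max_unavailable: int = 0) -> List[Tuple[int, int]]:
    """Return the (old_pods, new_pods) counts after each step of the rollout."""
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be 0")
    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Start new pods, never exceeding replicas + max_surge pods in total.
        add = min(replicas - new, replicas + max_surge - (old + new))
        if add > 0:
            new += add
            steps.append((old, new))
        # Stop old pods, never dropping below replicas - max_unavailable in total.
        remove = min(old, (old + new) - (replicas - max_unavailable))
        if remove > 0:
            old -= remove
            steps.append((old, new))
    return steps


if __name__ == "__main__":
    for old_pods, new_pods in rolling_update(3):
        print(f"old={old_pods} new={new_pods} total={old_pods + new_pods}")
```

With the default values the total never falls below the desired three pods, which is why users see little or no downtime during the update.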

Overall, Kubernetes provides a powerful platform for managing containerized applications at scale, making it easier to deploy, scale, and manage applications in a production environment.

The process of deploying an application in a Kubernetes cluster involves several steps:

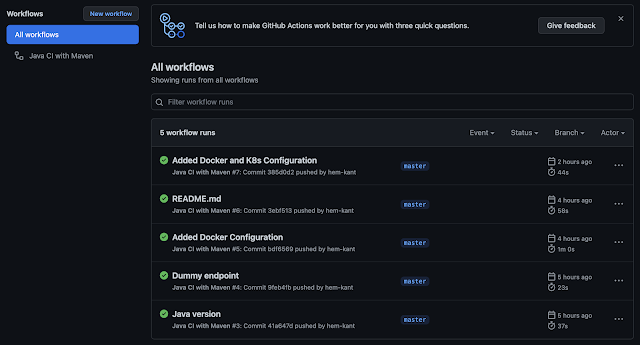

- Containerizing the application: The first step is to containerize the application by creating a Docker image that includes the application code and all its dependencies.

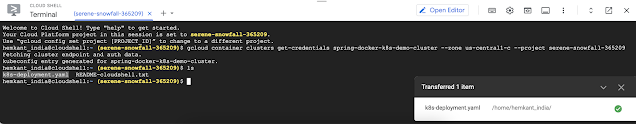

- Creating a deployment manifest: Once the application is containerized, you need to create a deployment manifest that defines the desired state of the application. This includes the number of replicas, the container image to use, and any environment variables or volumes that the application requires.

- Creating a service manifest: A Service manifest defines a stable network endpoint for a set of pods, which it selects by their labels. The service handles network communication with the pods, both from inside the cluster and, depending on its type, from the external world.

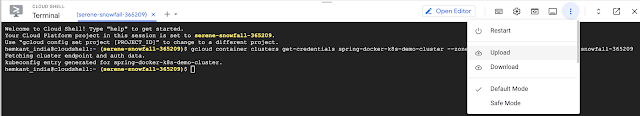

- Applying the manifests: The next step is to apply the manifests to the cluster. This can be done using the kubectl command-line tool, which communicates with the Kubernetes API server to create the necessary resources in the cluster.

- Verifying the deployment: After applying the manifests, you can use the kubectl command-line tool to verify that the deployment was successful. This includes checking that the ReplicaSet and pods were created and that the desired number of replicas is running.

- Updating the deployment: If you need to make changes to the deployment, such as updating the container image or changing the number of replicas, you can do so by modifying the deployment manifest and reapplying it to the cluster. Kubernetes will then update the actual state of the system to match the desired state.

- Scaling the deployment: If the workload increases, you can scale the deployment by changing the replica count in the deployment manifest and reapplying it to the cluster. Kubernetes will then create new pods to handle the increased load.

- Monitoring the deployment: Monitoring the deployment, including the health and performance of the application, is important to ensure that the application is running as expected and to troubleshoot any issues that may arise.
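The service, apply, verify, and scale steps above can be sketched in Python as follows. The script builds a Service manifest as a dictionary and lists the `kubectl` commands for the later steps without executing them. The application name, ports, and file name are placeholders, and `kubectl_workflow` is a hypothetical helper that only assembles command lines.

```python
import json
from typing import Any, Dict, List


def service_manifest(name: str, port: int, target_port: int, service_type: str = "ClusterIP") -> Dict[str, Any]:
    """Build a v1 Service that routes traffic to pods labelled app=<name>."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": service_type,            # ClusterIP is reachable only inside the cluster
            "selector": {"app": name},       # must match the pod labels of the deployment
            "ports": [{"port": port, "targetPort": target_port}],
        },
    }


def kubectl_workflow(name: str, manifest_path: str, replicas: int) -> List[List[str]]:
    """Return the kubectl commands for applying, verifying, and scaling."""
    return [
        ["kubectl", "apply", "-f", manifest_path],                # apply the manifests
        ["kubectl", "rollout", "status", f"deployment/{name}"],   # wait for the rollout
        ["kubectl", "get", "pods", "-l", f"app={name}"],          # list the pods
        # Imperative alternative to editing the replica count and re-applying:
        ["kubectl", "scale", f"deployment/{name}", f"--replicas={replicas}"],
    ]


if __name__ == "__main__":
    print(json.dumps(service_manifest("my-api", 80, 8080), indent=2))
    for command in kubectl_workflow("my-api", "manifests.json", 5):
        print(" ".join(command))
```

To actually run a command, each list could be passed to `subprocess.run(command, check=True)` on a machine where `kubectl` is configured for the cluster.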

How can we secure a deployment in Kubernetes?

- Secure communication: Ensure that all communication between components within the cluster, as well as between the cluster and external systems, is secure. This can be done by using secure protocols such as HTTPS and securing etcd with proper authentication and authorization.

- Network segmentation: Use network policies to segment the network and limit communication between pods and services.

- Role-based access control (RBAC): Use RBAC to control access to the Kubernetes API, and limit the actions that users, groups, and service accounts can perform within the cluster.

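- Secrets and ConfigMaps management: Use Kubernetes Secrets to store sensitive information such as passwords, tokens, and certificates, and ConfigMaps for non-sensitive configuration, instead of hard-coding these values in the application code. Secret values are only base64-encoded by default, so enable encryption at rest and restrict access to them with RBAC.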

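- Pod security: Define the security context for pods and containers, for example running as a non-root user and enabling security features such as AppArmor or SELinux, and set resource limits. PodSecurityPolicy, the original mechanism for enforcing such settings, was deprecated in Kubernetes 1.21 and removed in 1.25; newer clusters use the built-in Pod Security admission controller instead.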

- Regular auditing: Regularly audit the cluster for security risks and compliance issues, and take action as necessary.

- Secure your nodes: Secure the nodes by using a firewall, configuring secure boot, using a trusted platform module (TPM), and securing the operating system.

- Use the built-in security features: Combine Kubernetes features such as network policies, pod security controls, Secrets, and ConfigMaps to secure your deployment rather than relying on a single control.

- Use third-party tools: Tools such as kube-bench and kube-hunter can scan and test the cluster for vulnerabilities and misconfigurations.

As an example, consider a deployment file that creates a deployment named "my-app" with 3 replicas and runs the container as a non-root user with a read-only root file system. The environment variables SECRET_KEY and CONFIG_SETTINGS are set from a Kubernetes Secret and a ConfigMap respectively, which hold the sensitive and non-sensitive information. It also relies on a pod security policy to govern the security context of the pod.
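The deployment file itself is not reproduced here, so the following Python sketch only approximates a manifest matching that description. The image URL, user ID, and the Secret and ConfigMap names and keys are assumptions, and the pod security policy part is left out because it lives in a separate cluster-level resource.

```python
import json
from typing import Any, Dict


def hardened_deployment(name: str = "my-app", replicas: int = 3) -> Dict[str, Any]:
    """Build a Deployment with a restrictive container security context."""
    labels = {"app": name}
    container = {
        "name": name,
        "image": "example.com/my-app:1.0.0",   # hypothetical image
        "securityContext": {
            "runAsNonRoot": True,              # refuse to start as root
            "runAsUser": 1000,                 # assumed non-root user ID
            "readOnlyRootFilesystem": True,    # container cannot write to its root FS
            "allowPrivilegeEscalation": False,
        },
        "env": [
            {   # sensitive value read from a Secret (assumed name and key)
                "name": "SECRET_KEY",
                "valueFrom": {"secretKeyRef": {"name": "my-app-secret", "key": "secret-key"}},
            },
            {   # non-sensitive value read from a ConfigMap (assumed name and key)
                "name": "CONFIG_SETTINGS",
                "valueFrom": {"configMapKeyRef": {"name": "my-app-config", "key": "config-settings"}},
            },
        ],
    }
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [container]},
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(hardened_deployment(), indent=2))
```

The Secret and ConfigMap referenced by name would need to exist in the same namespace before the pods can start.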