This post is based on feedback received at the SDL Web Dev Summit in Delhi, India.

It continues my previous blog post, where we used Elasticsearch as storage: when components are published, their data is saved in Elasticsearch.

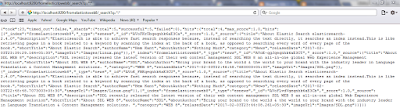

Earlier, whenever we needed to index a component, we first had to create a model for its schema, which introduced a dependency on that model. To remove this dependency, we now serialize the DCP (Dynamic Component Presentation), which is in XML format, into JSON. NEST, the .NET client for Elasticsearch, can index JSON directly.
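To make the XML-to-JSON step concrete, here is a minimal sketch that uses only the .NET base class library (`System.Xml.Linq` and `System.Text.Json.Nodes`, .NET 6 or later). It is not necessarily the conversion used in this post: the class name `DcpToJson` and the mapping rules (attributes become `@name` properties, repeated child elements become arrays, text-only elements become strings, namespace declarations and mixed content are ignored) are assumptions for illustration. Newtonsoft.Json's `JsonConvert.SerializeXNode` is a ready-made alternative with similar conventions.

```csharp
using System.Linq;
using System.Xml.Linq;
using System.Text.Json.Nodes;

// Hypothetical helper (assumption, not the post's code): converts DCP XML into a
// JSON string so it can be indexed without a schema-specific model class.
public static class DcpToJson
{
    public static string Convert(string xml)
    {
        XElement root = XElement.Parse(xml);
        // Wrap the result in an object keyed by the root element name, e.g. {"blog": {...}}.
        var doc = new JsonObject { [root.Name.LocalName] = ToNode(root) };
        return doc.ToJsonString();
    }

    // Maps one element: attributes -> "@name", children grouped by name
    // (a repeated name becomes an array), text-only elements -> string values.
    private static JsonNode ToNode(XElement element)
    {
        // Skip xmlns declarations such as xmlns:tcm='...'.
        var attributes = element.Attributes().Where(a => !a.IsNamespaceDeclaration).ToList();
        if (!element.HasElements && attributes.Count == 0)
        {
            return JsonValue.Create(element.Value);
        }

        var obj = new JsonObject();
        foreach (XAttribute attribute in attributes)
        {
            obj["@" + attribute.Name.LocalName] = attribute.Value;
        }

        foreach (var group in element.Elements().GroupBy(e => e.Name.LocalName))
        {
            var items = group.ToList();
            if (items.Count == 1)
            {
                obj[group.Key] = ToNode(items[0]);
            }
            else
            {
                var array = new JsonArray();
                foreach (XElement item in items)
                {
                    array.Add(ToNode(item));
                }
                obj[group.Key] = array;
            }
        }

        // Keep the text of an element that has attributes but no child elements.
        if (!element.HasElements && element.Value.Length > 0)
        {
            obj["#text"] = element.Value;
        }
        return obj;
    }
}
```

The returned string can then be passed as the request body to the NEST low-level `Index` call, in the same way as the hand-written `json` string in the NEST snippet later in this post.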

Now we only need to allow the schema in the Dynamic Component Template that we created for indexing data in Elasticsearch.

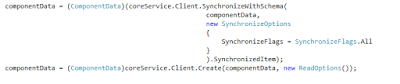

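We also use the Link Resolver TBB (Template Building Block) to generate links. For example, when a component with an attached multimedia component is published, the Link Resolver resolves the link; the result can be seen in the DCP XML at the end of this post.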


In NEST, we used the low-level client to index a raw JSON string:

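```csharp
using System;
using Nest;

// Assumption: a local Elasticsearch node; replace the URL with your cluster's address.
var settings = new ConnectionSettings(new Uri("http://localhost:9200"));
var client = new ElasticClient(settings);

// Document to index. In a C# verbatim string (@"..."), "" produces a literal double quote.
// String values must stay on one line: raw line breaks inside a JSON string are invalid.
var json = @"{
    ""BookName"": ""Book1"",
    ""ISBN"": ""978-3-16-148410-0"",
    ""chapter"": [
        {
            ""chapter_name"": ""Chapter1"",
            ""chapter_desc"": ""Before getting into computer programming, let us first understand computer programs and what they...""
        },
        {
            ""chapter_name"": ""Chapter2"",
            ""chapter_desc"": ""Today computer programs are being used in almost every field, household, agriculture, medical, entertainment, defense..""
        },
        {
            ""chapter_name"": ""Chapter3"",
            ""chapter_desc"": ""MS Word, MS Excel, Adobe Photoshop, Internet Explorer, Chrome, etc., are...""
        },
        {
            ""chapter_name"": ""Chapter4"",
            ""chapter_desc"": ""Computer programs are being used to develop graphics and special effects in movie...""
        }
    ]
}";

// The low-level client sends the string as the request body unchanged, so no model class is needed.
// Index "fromelasticstoweb8", type "esnews"; the generic argument is the response body type.
var indexResponse = client.LowLevel.Index<string>("fromelasticstoweb8", "esnews", json);
```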
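To show roughly what this low-level call does on the wire, here is a hedged sketch that builds the equivalent REST request with `HttpClient` types from the base class library. It assumes the Elasticsearch 5.x/6.x URL layout `POST /{index}/{type}` (which lets Elasticsearch generate the document id; mapping types were removed in later versions), a node at `http://localhost:9200`, and a hypothetical `EsIndexRequest.Build` helper. The request is only constructed here, not sent.

```csharp
using System;
using System.Net.Http;
using System.Text;

// Hypothetical helper (assumption): builds, but does not send, the REST request
// that indexes a raw JSON document with an auto-generated id.
public static class EsIndexRequest
{
    public static HttpRequestMessage Build(Uri baseUri, string index, string type, string json)
    {
        // POST /{index}/{type}: type-based URL layout of Elasticsearch 5.x/6.x.
        // Note: if baseUri has a path without a trailing slash, its last segment is replaced.
        var uri = new Uri(baseUri, $"{Uri.EscapeDataString(index)}/{Uri.EscapeDataString(type)}");
        return new HttpRequestMessage(HttpMethod.Post, uri)
        {
            // The JSON body is sent as-is, exactly like the string passed to the low-level client.
            Content = new StringContent(json, Encoding.UTF8, "application/json")
        };
    }
}
```

To actually index the document, the request could be sent with `await new HttpClient().SendAsync(request)`; the NEST client additionally handles connection pooling, retries and response parsing, which is why it is the more convenient choice inside a .NET application.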

```xml
<blog Title='Testing of model' Id='tcm:6-13290' xmlns:xlink='http://www.w3.org/1999/xlink' xmlns:tcm='http://www.tridion.com/ContentManager/5.0'>
  <blogTitle>Testing of model</blogTitle>
  <blogDescription>Remove model dependency</blogDescription>
  <image Title='skyline' Id='tcm:6-291' Path='/DigitalAssets/skyline.jpg' xmlns:tridion='http://www.tridion.com/ContentManager/5.0'></image>
  <publication Id='tcm:0-6-1' Title='400 Example Site'></publication>
</blog>
```
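To check what the Link Resolver produced, the resolved links can be read back from the published DCP XML. This is a minimal sketch with `System.Xml.Linq`, assuming (as in the sample above) that a resolved multimedia link appears as a `Path` attribute on an element such as `image`; `ResolvedLinks` is a hypothetical helper name.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

// Hypothetical helper (assumption): lists (element name, Path) pairs for every
// element in a DCP that carries a resolved "Path" attribute, e.g. <image>.
public static class ResolvedLinks
{
    public static List<(string Element, string Path)> Find(string dcpXml)
    {
        XElement root = XElement.Parse(dcpXml);
        return root.DescendantsAndSelf()
            .Where(e => e.Attribute("Path") != null)
            .Select(e => (e.Name.LocalName, (string)e.Attribute("Path")))
            .ToList();
    }
}
```

For the sample DCP, this would return a single entry for `image` with the path `/DigitalAssets/skyline.jpg`, which is the value that ends up in the indexed JSON.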

Happy coding and keep sharing !!!