Monitoring server performance is an important activity. It helps us provide an uninterrupted, smooth end-user experience, and it also helps in forecasting when servers need horizontal or vertical scaling. Today we are going to see how we can use the open source tools Beats, Elasticsearch, and Kibana to monitor the performance of our servers. Before we move further, let's talk about each of these services.

- Beats: Beats are lightweight data shippers that send data from multiple machines to Logstash or Elasticsearch. Each Beat is a small service that collects data from your servers and indexes it in Elasticsearch, and with the help of Kibana we can present that data visually. Types of Beats and how to install and configure them.

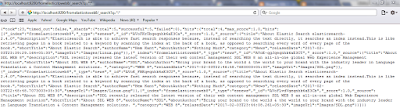

- Elasticsearch: Elasticsearch is a search engine based on Lucene. It exposes an HTTP API that can be called from C#, Java, PHP, curl, and other clients. Learn more about Elasticsearch and how to install and configure Elasticsearch.

- Kibana: Kibana is a visualization application that gets its data from Elasticsearch. It provides a customizable and user-friendly UI in which you can combine various widget types to create your own dashboards. The dashboards can be easily saved, shared, and linked. You can also embed the charts as HTML in your own web application.
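To make the Elasticsearch API mentioned above a little more concrete, here is a minimal Python sketch that asks Elasticsearch for the newest events a Beat has indexed. It is an illustration under assumptions, not part of the original setup: the function names are made up for this example, the index pattern `metricbeat-*` assumes Metricbeat with its default index naming, and `http://localhost:9200` assumes a local, unsecured Elasticsearch on its default port.

```python
import json
import urllib.request


def build_recent_events_query(minutes=15, size=5):
    """Build a search body for the newest events of the last `minutes` minutes.

    Relies only on the `@timestamp` field, which Beats add to every event.
    """
    return {
        "size": size,
        "sort": [{"@timestamp": {"order": "desc"}}],
        "query": {"range": {"@timestamp": {"gte": f"now-{minutes}m"}}},
    }


def search(base_url, index_pattern, body):
    """POST the body to the _search endpoint and return the decoded JSON reply."""
    request = urllib.request.Request(
        f"{base_url}/{index_pattern}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def print_recent_timestamps(base_url="http://localhost:9200",
                            index_pattern="metricbeat-*"):
    """Print the timestamps of the newest events (both defaults are assumptions)."""
    reply = search(base_url, index_pattern, build_recent_events_query())
    for hit in reply["hits"]["hits"]:
        print(hit["_source"]["@timestamp"])
```

Calling `print_recent_timestamps()` once a Beat is shipping data should list a handful of recent timestamps. If your cluster requires authentication or runs on another host, the request would need to be adjusted accordingly.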

A Beats setup consists of:

- Elasticsearch for storage and indexing. Install Elasticsearch.

- Kibana for the UI. Install Kibana.

- One or more Beats. Install the Beats on your servers to capture data. Install Beats.

- Kibana dashboards for visualizing the data.

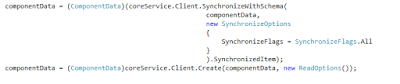

Once you are done with the installation and configuration of all the prerequisites, let's see how it looks when Beats push data into Elasticsearch and Kibana uses that data for visualization. Make sure all the Beats services, Elasticsearch, and Kibana are up and running.
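One quick way to confirm that Elasticsearch and Kibana are up is to check that their ports accept connections. The sketch below is only a rough check under assumptions: it presumes both services run on `localhost` with their default ports (9200 for Elasticsearch, 5601 for Kibana), and the helper names are invented for this example. An open port shows that something is listening, not that the service is healthy.

```python
import socket

# Assumed locations: default ports on the local machine. Adjust for your setup.
DEFAULT_SERVICES = {
    "elasticsearch": ("localhost", 9200),
    "kibana": ("localhost", 5601),
}


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable hosts.
        return False


def check_services(services=DEFAULT_SERVICES):
    """Map each service name to whether its port currently accepts connections."""
    return {name: is_port_open(host, port) for name, (host, port) in services.items()}
```

For example, `print(check_services())` prints a dictionary with one `True` or `False` entry per service. Beats do not listen on a port by default, so they are better checked through your system's service manager.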

Let's see some of them in detail.